High-Voltage Feedback Control for Neutron Generator Yield Stabilization

Compact neutron generators are essential tools for homeland security, oil well logging, and materials research. They produce neutrons by accelerating deuterium or tritium ions into a metal hydride target, where deuterium-deuterium (DD) or deuterium-tritium (DT) fusion reactions occur. The neutron yield, which determines the sensitivity and accuracy of the measurement, is highly sensitive to the energy and current of the ion beam. The ion energy is set by the high-voltage potential applied to the accelerator column, typically in the range of 70 kV to 200 kV, while the beam current depends mainly on the ion source. Maintaining a stable neutron output over long operating periods, despite drifts in ion source plasma, target degradation, and thermal effects, requires a high-voltage feedback control system that treats neutron flux as the primary process variable.

The fundamental challenge lies in the complexity of the transfer function between applied high voltage and neutron yield. The fusion cross-section is highly non-linear in ion energy and rises steeply across the energy range in which these generators operate, so a small change in voltage produces a disproportionate change in yield. Additionally, the ion beam composition (atomic vs. molecular ions) changes with ion source conditions: a molecular ion shares its kinetic energy among its nuclei, which lowers the effective energy per nucleus. Conventional control, which regulates the high-voltage output to a fixed setpoint and is therefore open-loop with respect to yield, is insufficient because it does not account for these variations in the ion source and target. A closed-loop system based on direct neutron flux measurement provides the necessary correction.
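The effect of beam composition on yield can be illustrated with a rough scaling estimate. The sketch below assumes a thin deuterium target, a constant astrophysical S-factor, and a Gamow constant of about 31.4 keV^0.5 for the DD reaction; the function names are hypothetical and the result is a relative figure only, not a calibrated cross-section.

```python
import math

# Assumed Gamow constant for the DD reaction, in sqrt(keV). Illustrative value.
GAMOW_DD_SQRT_KEV = 31.4


def energy_per_deuteron_kev(voltage_kv: float, deuterons_per_ion: int) -> float:
    """Kinetic energy carried by each deuteron of a singly charged ion."""
    return voltage_kv / deuterons_per_ion


def relative_dd_cross_section(deuteron_energy_kev: float) -> float:
    """Unnormalized DD cross-section for a deuteron striking a deuteron at rest.

    Uses sigma ~ exp(-B_G / sqrt(E_cm)) / E_cm with a constant S-factor
    (an assumption; real parameterizations let S vary with energy).
    """
    if deuteron_energy_kev <= 0:
        raise ValueError("energy must be positive")
    e_cm = deuteron_energy_kev / 2.0  # equal masses: half the lab energy
    return math.exp(-GAMOW_DD_SQRT_KEV / math.sqrt(e_cm)) / e_cm


# Compare atomic (D+) and molecular (D2+) ions at the same 100 kV potential.
atomic = relative_dd_cross_section(energy_per_deuteron_kev(100.0, 1))
molecular = relative_dd_cross_section(energy_per_deuteron_kev(100.0, 2))
print(f"atomic / molecular cross-section ratio: {atomic / molecular:.2f}")
```

Under these assumptions each deuteron from a molecular ion reacts with a noticeably lower probability than one from an atomic ion at the same voltage, even though the molecular ion delivers two deuterons per unit charge. A shift in the atomic fraction therefore changes the yield at a fixed voltage setpoint.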

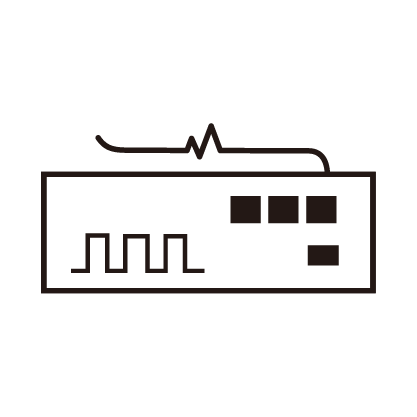

The feedback loop architecture begins with a neutron detector. This is often a pressurized helium-3 proportional counter or a fast plastic scintillator coupled with a photomultiplier tube, positioned at a fixed, reproducible geometry relative to the generator target. The detector electronics produce a pulse rate proportional to the neutron flux. This rate is digitized and compared to a user-set yield setpoint. The error signal is processed by a controller, typically a Proportional-Integral-Derivative (PID) algorithm implemented in a real-time embedded system. The controller's output is a correction signal applied to the high-voltage power supply's reference input.
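The loop described above can be sketched as a discrete PID update. This is a minimal illustration, not a production controller: the class name, gains, and output limits are hypothetical, and the output is assumed to be a correction in kV added to the supply's reference.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class YieldPID:
    """Discrete PID acting on neutron count rate (counts per second)."""
    kp: float
    ki: float
    kd: float
    out_min: float  # lowest allowed correction (kV, assumed unit)
    out_max: float  # highest allowed correction (kV, assumed unit)
    _integral: float = 0.0
    _prev_error: Optional[float] = None

    def update(self, setpoint_cps: float, measured_cps: float, dt_s: float) -> float:
        error = setpoint_cps - measured_cps
        if self._prev_error is None:
            derivative = 0.0  # no history on the first sample
        else:
            derivative = (error - self._prev_error) / dt_s
        self._prev_error = error

        candidate_integral = self._integral + error * dt_s
        output = self.kp * error + self.ki * candidate_integral + self.kd * derivative
        clamped = min(self.out_max, max(self.out_min, output))
        # Simple anti-windup: only accept the new integral when not saturated.
        if clamped == output:
            self._integral = candidate_integral
        return clamped


# Usage with a made-up linear plant: rate = 900 + 20 * correction_kv.
pid = YieldPID(kp=0.01, ki=0.05, kd=0.0, out_min=-10.0, out_max=10.0)
correction_kv = 0.0
for _ in range(200):
    measured = 900.0 + 20.0 * correction_kv
    correction_kv = pid.update(setpoint_cps=1000.0, measured_cps=measured, dt_s=1.0)
print(f"settled correction: {correction_kv:.3f} kV")
```

The linear plant is only a stand-in for testing the arithmetic; the real voltage-to-yield relationship is non-linear, so gains tuned at one operating point may need rescheduling at another.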

This correction signal can modulate either or both of two parameters. The most direct is the accelerator voltage itself. If the neutron yield drops, the controller commands a slight increase in the accelerating potential, raising the ion energy and the fusion cross-section. This requires a high-voltage supply with a wide regulation bandwidth and exceptionally low noise, and the correction steps must be fine enough that they do not produce yield jumps that could be mistaken for genuine signal variations in the measurement application. The second, often complementary, point of control is the ion source extraction voltage or the ion source plasma power. Modulating the ion source output adjusts the beam current, providing another knob to control yield independently of beam energy.
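One way to keep voltage corrections fine-grained is to limit how far the reference may move per control update. The helper below is a sketch under assumed names and limits; the step size and the 70 kV to 200 kV bounds are placeholders, not values for any particular supply.

```python
def next_reference_kv(
    current_kv: float,
    requested_kv: float,
    max_step_kv: float,
    min_kv: float,
    max_kv: float,
) -> float:
    """Move the HV reference toward the request by at most max_step_kv,
    then clamp the result to the allowed operating range."""
    step = max(-max_step_kv, min(max_step_kv, requested_kv - current_kv))
    return max(min_kv, min(max_kv, current_kv + step))


# A 5 kV request is applied in 0.5 kV increments over successive updates.
print(next_reference_kv(100.0, 105.0, 0.5, 70.0, 200.0))
```

Slew limiting of this kind trades response speed for smoothness, so the step size would have to be chosen together with the controller gains.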

Implementing this feedback is fraught with challenges. The neutron detector signal is inherently stochastic, following Poisson statistics. The controller must be designed to filter this statistical noise without introducing excessive lag that would make the loop unstable. The response of the measured neutron flux to a voltage change is also delayed: the supply must settle, the ions must transit the accelerator, and, above all, enough counts must accumulate for the change to be distinguishable from statistical fluctuation. This latency must be characterized and accounted for in the control loop design. Furthermore, the system must discriminate between genuine yield changes and artifacts caused by detector temperature drift or high-voltage supply ripple.
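The trade-off between statistical noise and lag can be made concrete. For Poisson counting, N counts carry a relative uncertainty of 1/sqrt(N), which fixes the counting time needed for a given precision. The sketch below computes that time and applies a simple exponential moving average; the function and class names are hypothetical, and the smoothing factor is an arbitrary example.

```python
import math


def relative_uncertainty(counts: float) -> float:
    """Relative standard deviation of a Poisson count: 1 / sqrt(N)."""
    return 1.0 / math.sqrt(counts)


def counting_time_s(rate_cps: float, target_relative_uncertainty: float) -> float:
    """Time needed to reach the target precision at a given count rate."""
    return 1.0 / (rate_cps * target_relative_uncertainty ** 2)


class RateFilter:
    """Exponential moving average of the measured count rate."""

    def __init__(self, alpha: float) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.value = None  # no estimate until the first sample arrives

    def update(self, rate_cps: float) -> float:
        if self.value is None:
            self.value = rate_cps
        else:
            self.value = self.alpha * rate_cps + (1.0 - self.alpha) * self.value
        return self.value


# At 1000 counts per second, 1 % precision needs about 10 s of counting.
print(counting_time_s(1000.0, 0.01))
```

A smaller `alpha` suppresses more noise but adds lag, which eats into the phase margin of the loop; that is the tension the paragraph above describes.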

Another layer of complexity is target degradation. Over its lifetime, the hydride target becomes depleted of deuterium or tritium, and sputtering erodes the active layer. This results in a gradual decrease in neutron yield even if voltage and current are held constant. A purely feedback-based system would continuously raise the voltage to compensate, eventually exceeding the generator's rated specifications. Therefore, the control system must incorporate a model of target aging. It can track the voltage required to maintain a constant yield over time and generate an alert when this voltage approaches a limit, indicating the end of target life and the need for maintenance.
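The end-of-life tracking described above could be approximated by fitting a trend to the voltage needed to hold the yield setpoint. The sketch below assumes a linear drift, which is a simplification since real target aging need not be linear; the function names, the logged values, and the 110 kV limit are all made up for illustration.

```python
from typing import List, Optional, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two matching samples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hours_until_limit(
    hours: List[float], voltages_kv: List[float], limit_kv: float
) -> Optional[float]:
    """Projected operating hours left before the required voltage hits the limit.

    Returns None when the fitted trend is flat or falling.
    """
    slope, intercept = fit_line(hours, voltages_kv)
    if slope <= 0:
        return None
    hours_at_limit = (limit_kv - intercept) / slope
    return max(0.0, hours_at_limit - hours[-1])


# Hypothetical log: voltage needed to hold the setpoint, sampled every 100 h.
log_hours = [0.0, 100.0, 200.0, 300.0]
log_kv = [100.0, 101.0, 102.0, 103.0]
remaining = hours_until_limit(log_hours, log_kv, limit_kv=110.0)
print(f"projected hours remaining: {remaining:.0f}")
```

An alert could then be raised when the projection drops below a maintenance lead time, while a separate hard clamp keeps the commanded voltage under the rated limit regardless of the projection.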

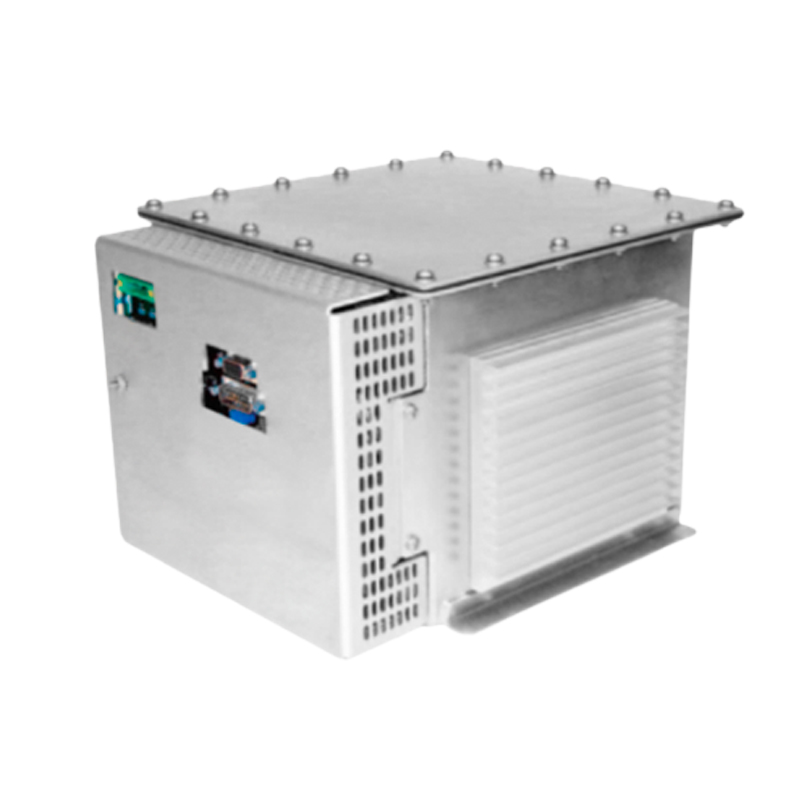

The high-voltage supply itself must be designed for extreme reliability in often harsh field environments. For oil well logging, the generator and its power supply must withstand intense shock, vibration, and temperatures exceeding 150°C. For security scanners, the supply must be compact, efficient, and ready to operate with minimal warm-up. Closing the loop on the actual neutron output lets the high-voltage power supply compensate for the natural instabilities of the ion source and target. The result is the consistent, reliable yield that is essential in applications where a false negative or an inaccurate measurement has significant consequences.