Life Prediction Model for Key Components of High Voltage Power Supply Based on Big Data Analysis

Reliability and lifetime prediction have become increasingly important in high-voltage power supply applications, where a failure can have significant consequences. Traditional reliability assessment relies on accelerated life testing and statistical analysis of failure data. Big data analysis offers new opportunities for life prediction by drawing on operational data from large populations of fielded power supplies. Applying big data techniques to life prediction enables better maintenance planning and product design.

The key components in high-voltage power supplies have distinct failure mechanisms and lifetime characteristics. Power semiconductors degrade under thermal cycling, which fatigues solder joints and bond wires, and under electrical stress. Electrolytic capacitors degrade through electrolyte loss, while film and ceramic capacitors degrade through dielectric aging and breakdown. Transformers suffer insulation aging and winding stress. Connectors suffer contact degradation and mechanical wear. Cooling fans fail through bearing wear and motor degradation. Each component type therefore requires a prediction model matched to its own failure mechanisms.
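Two widely used mechanism-specific models illustrate what such component models can look like. The sketch below assumes the common "10 °C rule" for electrolytic capacitors (life roughly doubles for every 10 °C drop below the rated temperature) and a Coffin-Manson power law for thermal-cycling fatigue of power semiconductors. The function names and the constants `a` and `n` are hypothetical and would have to be fitted to test or field data for a specific part.

```python
def capacitor_life_hours(rated_life_h: float, rated_temp_c: float,
                         operating_temp_c: float) -> float:
    """Estimate electrolytic capacitor life with the 10 degC rule of thumb.

    Life doubles for every 10 degC the operating (hot-spot) temperature sits
    below the rated temperature, and halves for every 10 degC above it.
    """
    return rated_life_h * 2.0 ** ((rated_temp_c - operating_temp_c) / 10.0)


def cycles_to_failure(delta_t_k: float, a: float, n: float) -> float:
    """Coffin-Manson style estimate N_f = a * delta_T**(-n) for thermal cycling.

    `a` and `n` are hypothetical fit constants; in practice they come from
    power-cycling tests on the specific module.
    """
    if delta_t_k <= 0:
        raise ValueError("temperature swing must be positive")
    return a * delta_t_k ** (-n)


# A 2000 h / 105 degC capacitor running at 65 degC: 2000 * 2**4 hours.
print(capacitor_life_hours(2000, 105, 65))  # 32000.0
# Halving the junction temperature swing multiplies cycle life by 2**n.
ratio = cycles_to_failure(20, a=1e12, n=5) / cycles_to_failure(40, a=1e12, n=5)
print(round(ratio, 6))  # 32.0
```

The exponent `n` dominates the result, which is why fitting it per device family matters more than the scale constant `a`.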

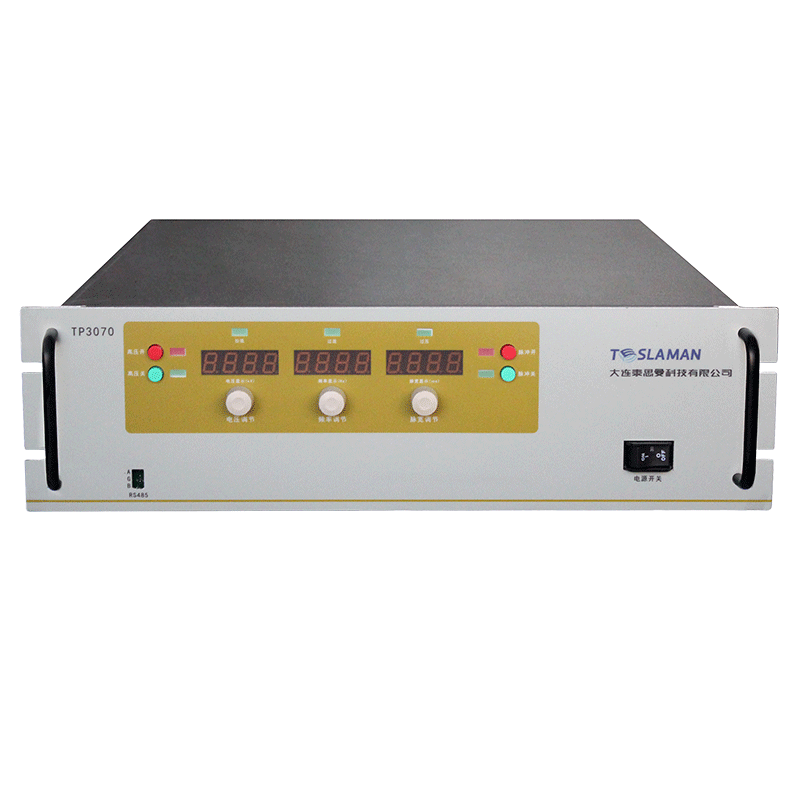

Data collection for life prediction requires comprehensive monitoring of operational parameters. Input voltage and current indicate the electrical stress environment. Output voltage and current reflect the load conditions. Temperature measurements capture thermal stress. Internal parameters such as switching frequency and duty cycle indicate operating conditions. Environmental conditions, including ambient temperature and humidity, affect degradation rates. The data collection system must capture these parameters continuously, with a sampling rate and resolution fine enough to resolve the slow parameter drifts that indicate degradation.
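A fixed record type helps keep fleet data consistent across units. The sketch below is one possible layout, assuming one sample per unit per acquisition interval; the class name, field names, units, and the derived `efficiency` indicator are illustrative choices, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TelemetrySample:
    """One time-stamped snapshot of the monitored operating parameters."""

    unit_id: str                 # hypothetical identifier of the fielded supply
    timestamp: datetime          # stored in UTC to simplify fleet-wide alignment
    input_voltage_v: float
    input_current_a: float
    output_voltage_v: float
    output_current_a: float
    switching_freq_hz: float
    duty_cycle: float            # fraction in [0, 1]
    component_temp_c: float      # e.g. heatsink or capacitor hot-spot sensor
    ambient_temp_c: float
    relative_humidity_pct: float

    def __post_init__(self) -> None:
        # Reject physically impossible readings at ingestion time.
        if not 0.0 <= self.duty_cycle <= 1.0:
            raise ValueError("duty_cycle must be between 0 and 1")
        if not 0.0 <= self.relative_humidity_pct <= 100.0:
            raise ValueError("relative humidity must be between 0 and 100 %")

    @property
    def efficiency(self) -> float:
        """Output power over input power; a slow decline can flag degradation."""
        p_in = self.input_voltage_v * self.input_current_a
        if p_in <= 0:
            return float("nan")
        return (self.output_voltage_v * self.output_current_a) / p_in


sample = TelemetrySample(
    unit_id="HV-0001",
    timestamp=datetime(2024, 1, 1, tzinfo=timezone.utc),
    input_voltage_v=400.0, input_current_a=10.0,
    output_voltage_v=30_000.0, output_current_a=0.12,
    switching_freq_hz=50_000.0, duty_cycle=0.45,
    component_temp_c=62.0, ambient_temp_c=25.0, relative_humidity_pct=40.0,
)
print(f"{sample.efficiency:.2f}")  # 0.90
```

Validating ranges at ingestion keeps obviously corrupt readings out of downstream statistics instead of filtering them later.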

Data infrastructure for big data analysis must handle large volumes of time-series data. Data acquisition systems collect operational data from distributed power supplies. Communication networks transmit data to central storage. Database systems organize and store the data efficiently. Data preprocessing cleans and normalizes the raw data. Feature extraction identifies relevant indicators of component health. The infrastructure must scale to accommodate growing data volumes.
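The cleaning and normalization steps can be sketched in a few lines. This assumes a simple plausibility range per signal (the `lo`/`hi` bounds here are hypothetical) and z-score normalization; a production pipeline would typically also resample all signals to a common time base.

```python
import math
import statistics
from typing import Iterable, List, Optional


def clean_series(raw: Iterable[Optional[float]], lo: float, hi: float) -> List[float]:
    """Drop missing values (None/NaN) and readings outside the plausible range [lo, hi]."""
    cleaned = []
    for value in raw:
        if value is None or math.isnan(value):
            continue  # lost packet or sensor dropout
        if not lo <= value <= hi:
            continue  # physically implausible, e.g. a saturated or disconnected sensor
        cleaned.append(value)
    return cleaned


def z_score(values: List[float]) -> List[float]:
    """Normalize to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]  # a constant signal carries no variation
    return [(v - mean) / std for v in values]


raw_temps = [41.2, None, 41.8, float("nan"), 250.0, 42.5, 43.1]
temps = clean_series(raw_temps, lo=-40.0, hi=150.0)
print(temps)  # [41.2, 41.8, 42.5, 43.1]
```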

Statistical analysis of operational data reveals patterns related to component degradation. Time-series analysis identifies trends in parameter values. Distribution analysis characterizes the variability in operating conditions. Correlation analysis identifies relationships between operating conditions and degradation. Survival analysis techniques model time-to-failure distributions. Regression analysis relates operating conditions to degradation rates. These statistical methods provide the foundation for life prediction models.
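Field data from a running fleet is mostly right-censored: most units have not failed yet, so their operating time is only a lower bound on their life. The Kaplan-Meier estimator is one standard survival-analysis technique that uses those censored units correctly. A minimal pure-Python sketch, with hypothetical operating hours:

```python
from typing import List, Sequence, Tuple


def kaplan_meier(durations: Sequence[float],
                 failed: Sequence[bool]) -> List[Tuple[float, float]]:
    """Return (time, survival probability) at each distinct failure time.

    durations: operating hours at failure, or at last observation for censored units
    failed:    True if the unit failed at that time, False if still running (censored)
    """
    records = sorted(zip(durations, failed))
    at_risk = len(records)
    survival = 1.0
    curve = []
    i = 0
    while i < len(records):
        t = records[i][0]
        failures = 0
        leaving = 0
        # Group every record that shares this time.
        while i < len(records) and records[i][0] == t:
            failures += int(records[i][1])
            leaving += 1
            i += 1
        if failures:
            # Product-limit step: conditional probability of surviving past t.
            survival *= 1.0 - failures / at_risk
            curve.append((t, survival))
        # Failed and censored units both leave the risk set after t.
        at_risk -= leaving
    return curve


hours = [5000, 8000, 8000, 12000, 15000]
failed = [True, True, False, True, False]
for t, s in kaplan_meier(hours, failed):
    print(t, round(s, 3))
# 5000 0.8
# 8000 0.6
# 12000 0.3
```

Simply discarding the two censored units would bias the estimate toward shorter lives, which is the main reason survival methods are preferred for field data.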

Machine learning techniques enable sophisticated life prediction models. Supervised learning methods train models on labeled failure data. Neural networks can capture complex nonlinear relationships between operating conditions and degradation. Random forests are robust to noisy inputs and provide feature-importance scores that help explain their predictions. Support vector machines handle high-dimensional feature spaces. Deep learning methods can extract features automatically from raw data. The choice of machine learning method depends on the available data and prediction requirements.
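As a minimal stand-in for the supervised methods named above, the sketch below trains a logistic regression classifier by batch gradient descent to estimate the probability that a unit fails within some horizon. Logistic regression is deliberately swapped in for neural networks, random forests, or SVMs because it fits in a few dependency-free lines; the two features and the labels are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(w: Sequence[float], b: float, x: Sequence[float]) -> float:
    """Predicted probability that the unit fails within the horizon."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train_logistic(X: Sequence[Sequence[float]], y: Sequence[int],
                   lr: float = 0.5, epochs: int = 2000) -> Tuple[List[float], float]:
    """Fit weights and bias by batch gradient descent on the mean log loss."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    m = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi  # derivative of log loss w.r.t. the logit
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / m for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b


# Hypothetical normalized features: [thermal stress index, output ripple index].
X = [[0.1, 0.2], [0.2, 0.1], [0.3, 0.3],   # units that survived the horizon
     [0.8, 0.9], [0.9, 0.7], [0.7, 0.8]]   # units that failed within it
y = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(X, y)
print(predict_proba(w, b, [0.85, 0.85]) > 0.5)  # True
```

The learned weights play a role similar to feature importance: a larger positive weight means that feature pushes the predicted failure probability up more strongly.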

Feature engineering transforms raw data into predictive indicators. Statistical features capture central tendency and variability of parameters. Trend features indicate degradation progression. Frequency domain features reveal patterns in periodic data. Operating condition features characterize the stress environment. Interaction features capture combined effects of multiple parameters. Effective feature engineering improves prediction accuracy and model interpretability.
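The feature families above can be sketched for a single analysis window, assuming evenly sampled temperature and load signals. The feature names and the temperature-times-load interaction term are illustrative choices, and a naive O(n²) DFT is used only to stay dependency-free; an FFT library would be used in practice.

```python
import cmath
import math
import statistics
from typing import Dict, Sequence


def linear_slope(values: Sequence[float], dt: float = 1.0) -> float:
    """Least-squares slope of the values against time (per second if dt is in s)."""
    t = [i * dt for i in range(len(values))]
    t_mean = statistics.fmean(t)
    v_mean = statistics.fmean(values)
    num = sum((ti - t_mean) * (vi - v_mean) for ti, vi in zip(t, values))
    den = sum((ti - t_mean) ** 2 for ti in t)
    return num / den


def dominant_frequency(values: Sequence[float], sample_rate_hz: float) -> float:
    """Frequency of the largest non-DC bin of a naive DFT (O(n^2))."""
    n = len(values)
    mean = statistics.fmean(values)
    best_k, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum((v - mean) * cmath.exp(-2j * math.pi * k * i / n)
                    for i, v in enumerate(values))
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * sample_rate_hz / n


def extract_features(temp_c: Sequence[float], load_a: Sequence[float],
                     sample_rate_hz: float) -> Dict[str, float]:
    """Statistical, trend, frequency and interaction features for one window."""
    return {
        "temp_mean": statistics.fmean(temp_c),
        "temp_std": statistics.pstdev(temp_c),
        "temp_slope_per_s": linear_slope(temp_c, 1.0 / sample_rate_hz),
        "temp_dominant_hz": dominant_frequency(temp_c, sample_rate_hz),
        # Interaction term: assumes temperature and load stress combine
        # multiplicatively (an illustrative assumption, not a fitted model).
        "temp_x_load_mean": statistics.fmean(t * i for t, i in zip(temp_c, load_a)),
    }


features = extract_features([40.0, 41.0, 42.0, 43.0], [1.0, 1.0, 2.0, 2.0],
                            sample_rate_hz=1.0)
print(features["temp_slope_per_s"])  # 1.0
```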

Model training requires careful attention to data quality and representativeness. Training data must include both normal operation and failure cases. The data must represent the full range of operating conditions. Because failures are rare, the data are heavily imbalanced between normal and failure cases, which requires special handling such as class weighting or resampling. Cross-validation techniques assess model generalization. Hyperparameter optimization improves model performance. The training process must produce models that generalize well to new data.
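Two common tools for imbalanced failure data are class weighting and stratified cross-validation. The sketch below shows both in plain Python; the function names are hypothetical, and the labels represent windows of data marked normal (0) or pre-failure (1).

```python
from collections import Counter, defaultdict
from typing import Dict, Hashable, Iterator, List, Sequence, Tuple


def balanced_class_weights(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * cnt) for cls, cnt in counts.items()}


def stratified_kfold(labels: Sequence[Hashable],
                     n_folds: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, test_indices) with each class spread evenly over folds."""
    by_class: Dict[Hashable, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds: List[List[int]] = [[] for _ in range(n_folds)]
    for indices in by_class.values():
        # Deal each class round-robin so rare failures land in every fold.
        for pos, idx in enumerate(indices):
            folds[pos % n_folds].append(idx)
    for f in range(n_folds):
        test = sorted(folds[f])
        train = sorted(i for g in range(n_folds) if g != f for i in folds[g])
        yield train, test


labels = [0] * 8 + [1] * 2  # 8 normal windows, 2 pre-failure windows
print(balanced_class_weights(labels))  # {0: 0.625, 1: 2.5}
```

The weight formula matches the one scikit-learn documents for `class_weight="balanced"`. This sketch does not shuffle, and with fleet data the folds should also be grouped by unit so that windows from the same power supply never appear in both the training and test sets.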

Model validation assesses prediction accuracy and reliability. Historical data with known outcomes provides ground truth for validation. Performance metrics, such as the mean absolute error of remaining-life estimates, quantify prediction accuracy. Confidence intervals indicate prediction uncertainty. Residual analysis identifies systematic prediction errors. Validation must cover diverse operating conditions and failure modes. The validation process builds confidence in model predictions.
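A sketch of accuracy metrics and an uncertainty estimate for remaining-useful-life (RUL) predictions, assuming mean absolute error (MAE) and root-mean-square error (RMSE) as the metrics and a percentile bootstrap for the confidence interval; the RUL values are hypothetical.

```python
import math
import random
from typing import Callable, Sequence, Tuple

Metric = Callable[[Sequence[float], Sequence[float]], float]


def mae(pred: Sequence[float], actual: Sequence[float]) -> float:
    """Mean absolute error, in the same unit as the predictions (e.g. hours)."""
    return sum(abs(p - a) for p, a in zip(pred, actual)) / len(pred)


def rmse(pred: Sequence[float], actual: Sequence[float]) -> float:
    """Root-mean-square error; penalizes large misses more than MAE does."""
    return math.sqrt(sum((p - a) ** 2 for p, a in zip(pred, actual)) / len(pred))


def bootstrap_ci(pred: Sequence[float], actual: Sequence[float],
                 metric: Metric = mae, n_resamples: int = 2000,
                 alpha: float = 0.05, seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for a metric."""
    rng = random.Random(seed)  # fixed seed for reproducible intervals
    n = len(pred)
    stats = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]  # resample units with replacement
        stats.append(metric([pred[i] for i in idx], [actual[i] for i in idx]))
    stats.sort()
    lo = stats[int(alpha / 2 * n_resamples)]
    hi = stats[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi


pred_rul_h = [100.0, 200.0, 300.0]    # hypothetical predicted remaining life
actual_rul_h = [110.0, 190.0, 330.0]  # observed remaining life
print(round(mae(pred_rul_h, actual_rul_h), 2))  # 16.67
```

With only a handful of validation units the interval is wide, which is itself useful information about how much the reported accuracy can be trusted.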

Deployment of life prediction models in operational environments requires integration with existing systems. Real-time data processing enables continuous prediction updates. Alert systems notify operators when predictions indicate approaching failure. Dashboard displays provide visibility into component health status. Integration with maintenance management systems enables proactive scheduling. The deployment must handle the computational requirements of prediction models.
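One way an alert layer might map predictions to operator notifications is sketched below. It assumes the model reports a lower confidence bound on remaining life and that a maintenance lead time is known; the alert levels, the 2× lead-time "watch" band, and all names are hypothetical design choices.

```python
from enum import IntEnum
from typing import Iterable, Iterator, Tuple


class AlertLevel(IntEnum):
    OK = 0
    WATCH = 1
    CRITICAL = 2


def alert_level(rul_lower_bound_h: float, lead_time_h: float) -> AlertLevel:
    """Classify a unit from the lower confidence bound of its predicted remaining life.

    Using the lower bound means an uncertain prediction raises an alert earlier.
    lead_time_h is the (hypothetical) time needed to schedule a repair and get parts.
    """
    if rul_lower_bound_h <= lead_time_h:
        return AlertLevel.CRITICAL
    if rul_lower_bound_h <= 2 * lead_time_h:
        return AlertLevel.WATCH
    return AlertLevel.OK


def notifications(levels: Iterable[Tuple[str, AlertLevel]]) -> Iterator[Tuple[str, AlertLevel]]:
    """Emit a notification only when the level changes, so operators are not flooded."""
    previous = AlertLevel.OK
    for timestamp, level in levels:
        if level != previous:
            yield timestamp, level
            previous = level


updates = [("t0", 900.0), ("t1", 300.0), ("t2", 290.0), ("t3", 120.0)]
stream = ((t, alert_level(rul, lead_time_h=168.0)) for t, rul in updates)
for t, level in notifications(stream):
    print(t, level.name)
# t1 WATCH
# t3 CRITICAL
```

Notifying only on level changes keeps continuous prediction updates from turning into repeated alerts for the same condition.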

Continuous improvement of prediction models requires feedback from operational experience. Actual failure events provide valuable data for model refinement. False positive and false negative predictions identify model weaknesses. New operating conditions may require model updates. Regular model retraining maintains prediction accuracy. The improvement process ensures models remain effective over time.
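Comparing warnings with actual outcomes makes false positives and false negatives measurable. A minimal sketch, assuming each record pairs whether the model warned about a unit with whether that unit actually failed within the warning horizon; the `min_recall` and `min_precision` thresholds are hypothetical values for a retraining trigger.

```python
import math
from dataclasses import dataclass
from typing import Sequence


@dataclass
class OutcomeCounts:
    true_pos: int = 0    # warned, and the unit failed
    false_pos: int = 0   # warned, but the unit kept running
    false_neg: int = 0   # no warning, yet the unit failed
    true_neg: int = 0    # no warning, no failure

    @property
    def precision(self) -> float:
        warned = self.true_pos + self.false_pos
        return self.true_pos / warned if warned else math.nan

    @property
    def recall(self) -> float:
        failed = self.true_pos + self.false_neg
        return self.true_pos / failed if failed else math.nan


def tally(warned: Sequence[bool], failed: Sequence[bool]) -> OutcomeCounts:
    """Count each combination of prediction and actual outcome."""
    counts = OutcomeCounts()
    for w, f in zip(warned, failed):
        if w and f:
            counts.true_pos += 1
        elif w:
            counts.false_pos += 1
        elif f:
            counts.false_neg += 1
        else:
            counts.true_neg += 1
    return counts


def needs_retraining(counts: OutcomeCounts, min_recall: float = 0.8,
                     min_precision: float = 0.5) -> bool:
    # NaN (no warnings or no failures yet) compares False, so it never
    # triggers retraining on its own.
    return counts.recall < min_recall or counts.precision < min_precision


counts = tally([True, True, False, False, True], [True, False, True, False, True])
print(needs_retraining(counts))  # True
```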

Application of life prediction models supports multiple business objectives. Predictive maintenance reduces unplanned downtime and maintenance costs. Spare parts inventory optimization reduces inventory carrying costs. Warranty cost prediction supports financial planning. Design improvement priorities emerge from failure mode analysis. Customer satisfaction improves through proactive service. The business value justifies investment in prediction capabilities.
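Spare-parts planning is one place where predicted failure rates turn directly into numbers. Under the simplifying assumption that failures of a part across the fleet during a resupply period follow a Poisson distribution (a constant failure rate), the stock level can be chosen as the smallest count that covers demand with a target probability. The fleet size, failure rate, and period below are hypothetical.

```python
import math


def poisson_cdf(k: int, mean: float) -> float:
    """P(N <= k) for Poisson-distributed demand N (fine for small means)."""
    term = math.exp(-mean)  # P(N = 0)
    total = term
    for i in range(1, k + 1):
        term *= mean / i  # P(N = i) derived from P(N = i - 1)
        total += term
    return total


def spares_needed(expected_failures: float, fill_target: float) -> int:
    """Smallest stock level s with P(demand <= s) >= fill_target."""
    if not 0.0 < fill_target < 1.0:
        raise ValueError("fill_target must be strictly between 0 and 1")
    s = 0
    while poisson_cdf(s, expected_failures) < fill_target:
        s += 1
    return s


# Hypothetical fleet: 200 units, predicted rate 2e-6 failures per hour, 5000 h period.
expected = 200 * 2e-6 * 5000
print(spares_needed(expected, fill_target=0.9))  # 4
```

Better life predictions sharpen `expected_failures`, which is how prediction accuracy translates into lower carrying costs for the same service level.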